Ilman Iqbal's Blog

Kubernetes Made Simple: A Hands-On Guide to Pods, Deployments, Services, Port Forwarding, Ingress, Config Maps and Secrets

Learn how to deploy a complete application stack on Kubernetes, from Pods, Deployments, and Services to ConfigMaps and Secrets. Discover how to expose apps externally with NodePorts and Ingress, reach services through port forwarding, connect Pods to services on external IPs, and keep configuration and credentials out of your images, all in a practical, hands-on way.

What is Kubernetes?

Kubernetes (K8s) is a container orchestrator. It runs and manages containers for you and helps with:

- Deployments & rollouts (deploy new versions safely)

- Scaling (add/remove replicas)

- Load balancing traffic to containers

- Health monitoring (restart unhealthy containers)

- Configuration management (ConfigMap) and secret management (Secret)

The Core Architecture (in simple terms)

| Component | What it does |
|---|---|
| Control Plane | The brain (API server, scheduler, controllers) |
| Worker Nodes | Where Pods (containers) actually run |
| etcd | Cluster state store (configs, secrets, cluster metadata) |
| Kubelet & container runtime | Local node agent + runtime (containerd, Docker) |

Using kubectl — the essential commands

kubectl is your remote control for Kubernetes. Use it to inspect, modify, and debug the cluster.

kubectl get — list resources

```bash
kubectl get pods
kubectl get deployments
kubectl get services
kubectl get namespaces
```

kubectl describe — show detailed information about a resource

```bash
kubectl describe pod my-pod
```

kubectl logs — print the logs from a container in a Pod

```bash
kubectl logs my-pod
```

kubectl exec — execute a command in a container in a Pod (interactive)

```bash
kubectl exec -it my-pod -- /bin/sh
```

Tip: Pods created by a Deployment get generated names. Find the exact name with kubectl get pods -n <namespace>, then use that exact name in kubectl exec or kubectl logs.

Contexts

A context controls which cluster and user your kubectl talks to. Always check your context before applying manifests to production clusters.

```bash
kubectl config current-context
kubectl config get-contexts
```

Namespaces

Namespaces are logical project-level divisions inside a cluster (like folders).

```bash
kubectl get namespaces
```

Typical default list:

```text
NAME              STATUS   AGE
default           Active   14m
kube-node-lease   Active   14m
kube-public       Active   14m
kube-system       Active   14m
```

| Namespace | Purpose |
|---|---|
| default | Where resources go if no namespace is specified |
| kube-system | Kubernetes system services (DNS, metrics) |
| kube-public | Generally empty; readable by all |
| kube-node-lease | Used for node heartbeats |

Create a namespace:

```bash
kubectl create namespace curity
```

Local clusters: Minikube, MicroK8s, K3s / k3d

For learning and local development you can run Kubernetes in several ways:

- Minikube — single-node cluster in a VM or Docker.

- MicroK8s — lightweight single-node K8s from Canonical. Uses microk8s kubectl.

- K3s — lightweight Kubernetes distribution, often used by k3d.

- k3d — runs K3s inside Docker containers (great for CI and local multi-cluster testing).

Tip: MicroK8s bundles its own kubectl. To be sure you're talking to the MicroK8s cluster, prepend microk8s (for example: microk8s kubectl get namespaces). This avoids accidentally using a different kubeconfig/context on your machine.

Example: create a cluster with k3d

```bash
k3d cluster create curity-local
```

Check contexts

```bash
kubectl config get-contexts
```

Example output:

```text
CURRENT   NAME                        CLUSTER                     AUTHINFO
*         k3d-curity-local            k3d-curity-local            admin@k3d-curity-local
          k3d-user-management-local   k3d-user-management-local   admin@k3d-user-management-local
```

Switch contexts with:

```bash
kubectl config use-context k3d-user-management-local
```

Check nodes & runtime

```bash
kubectl get nodes -o wide
```

This shows each node's OS image, kernel version, and container runtime (e.g. containerd://1.x). If the VERSION column carries a +k3s suffix and the runtime is containerd, you are most likely on a lightweight k3s/k3d cluster, for example one running in Docker on WSL2.

Deployments & Services — basics and YAML tips

Important: In YAML you can put multiple resource objects in one file separated by three dashes ---. Example: a Deployment and a Service in a single manifest. You can also create separate files (e.g., deployment.yaml and service.yaml).

Why create a Deployment?

A Deployment provides a higher-level management layer for Pods. It ensures your application is resilient, scalable, and easy to update. Key benefits include:

- Ephemeral Pods: If a Pod dies, it's gone. A Deployment automatically recreates Pods to maintain the desired state (self-healing).

- Scaling: You can declaratively set replicas and Kubernetes will spin up that many Pods and balance traffic across them via the Service.

- Declarative updates (Rolling Deployments): You can change container images, resource limits, or environment variables. The Deployment gradually replaces old Pods with new ones without downtime.

- Rollback: Kubernetes keeps track of Deployment revisions. If something goes wrong, you can easily roll back to a previous version.

- Consistency: Every Pod created by the Deployment has the same spec (labels, containers, env vars). This ensures predictable behavior across replicas.

- Portability: You describe your app once in YAML, and Kubernetes makes sure it runs the same way in dev, test, and production clusters.

In short: a Deployment gives you self-healing, scaling, rolling updates, and rollbacks — making it the standard for running apps in Kubernetes.

User-management Deployment example

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: user-management-app        # Unique name of the Deployment
  namespace: user-management-app   # Namespace to logically isolate this app from others
spec:
  replicas: 2                      # Number of Pod replicas to maintain
  selector:
    matchLabels:
      app: user-management-app     # The Deployment will manage only Pods that have this label
  template:
    metadata:
      labels:
        app: user-management-app   # This label is added to every Pod created by the Deployment.
                                   # It MUST match the selector above; the API server rejects
                                   # a Deployment whose selector doesn't match its template labels.
    spec:
      containers:
        - name: user-management-app       # Container name inside each Pod
          image: user-management:latest   # Docker image used by the container
          imagePullPolicy: IfNotPresent   # Pull image only if not present locally
          ports:
            - containerPort: 8082         # Port that the container listens on inside the Pod
              name: http                  # Named port: lets Services refer to it by name instead of number
          env:
            - name: EXTERNAL_BACKEND_URL
              # Pod will call this Service instead of a raw Windows IP. A short name like this
              # resolves only within the Service's own namespace; from another namespace use
              # external-backend-svc.<namespace>.svc.cluster.local.
              value: "http://external-backend-svc:8088"
```
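The selector rule in the comments above boils down to a subset check. The Pod may carry extra labels, but it must have every matchLabels key with the same value. Below is a minimal Python sketch of that check. It assumes the manifest has already been loaded into a dict (the parsing is not shown), and it covers only matchLabels, not matchExpressions.

```python
from typing import Mapping


def selector_matches(match_labels: Mapping[str, str], labels: Mapping[str, str]) -> bool:
    """Return True if every key/value in match_labels is also present in labels."""
    return all(labels.get(key) == value for key, value in match_labels.items())


# Relevant parts of the Deployment above as a plain dict (hypothetical in-memory form).
deployment = {
    "spec": {
        "selector": {"matchLabels": {"app": "user-management-app"}},
        "template": {"metadata": {"labels": {"app": "user-management-app"}}},
    }
}

match_labels = deployment["spec"]["selector"]["matchLabels"]
template_labels = deployment["spec"]["template"]["metadata"]["labels"]
print(selector_matches(match_labels, template_labels))          # True

# A typo in the template label breaks the match:
print(selector_matches(match_labels, {"app": "user-mgmt-app"}))  # False
```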

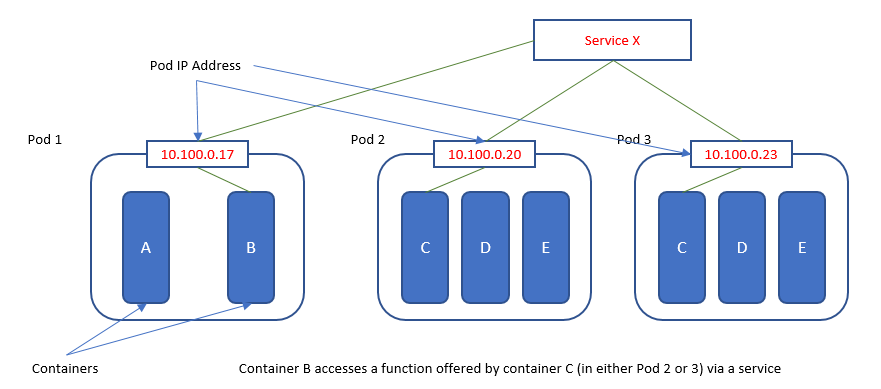

Why create a Service?

A Kubernetes Service gives your Pod(s) a stable DNS name and a ClusterIP. Without a Service, callers would have to use Pod IPs. Those IPs change whenever Pods are recreated, so other services can't reach the Pods reliably. Example in-cluster URL:

```text
http://user-management-svc.user-management-app.svc.cluster.local:8082
```

User-management Service example

```yaml
apiVersion: v1
kind: Service
metadata:
  name: user-management-svc        # Unique name of the Service
  namespace: user-management-app   # Namespace to logically isolate this app from others
spec:
  selector:
    app: user-management-app       # Matches Pods with label app=user-management-app
  ports:
    - protocol: TCP
      port: 8082                   # Port exposed on the Service's cluster-internal virtual IP
      targetPort: http             # Forwards to the Pod's containerPort named "http" (8082 above)
  type: ClusterIP                  # Default type; exposes the Service on an internal cluster IP
```
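Here targetPort is the name http rather than a number, so the Service relies on the Pod's named port. The Python sketch below is a rough model of that lookup. It illustrates the rule only and is not kube-proxy's actual implementation. The pod_ports list is a hypothetical dict form of the Deployment's containerPorts.

```python
from typing import Mapping, Sequence, Union


def resolve_target_port(target_port: Union[int, str], container_ports: Sequence[Mapping]) -> int:
    """Resolve a Service targetPort against a Pod's containerPorts.

    A numeric targetPort is used as-is; a string is looked up by port name.
    """
    if isinstance(target_port, int):
        return target_port
    for port in container_ports:
        if port.get("name") == target_port:
            return port["containerPort"]
    raise LookupError(f"no containerPort named {target_port!r}")


pod_ports = [{"containerPort": 8082, "name": "http"}]
print(resolve_target_port("http", pod_ports))  # 8082
print(resolve_target_port(9090, pod_ports))    # 9090
```

One benefit of named ports is that you can change the container's port number in the Deployment without touching the Service.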

Applying the Deployment and Service

You can apply these manifests using kubectl apply. The namespace must already exist (kubectl create namespace user-management-app). Since the resources specify a namespace, you can either rely on that or pass it explicitly during apply:

```bash
# Apply using the namespace in the manifest
kubectl apply -f user-management-deployment.yaml
kubectl apply -f user-management-service.yaml

# Or explicitly specify the namespace (it must match the one in the manifest, if set)
kubectl apply -f user-management-deployment.yaml -n user-management-app
kubectl apply -f user-management-service.yaml -n user-management-app
```

Deleting the Deployment or Service

```bash
# Delete Deployment
kubectl delete deployment user-management-app -n user-management-app

# Delete Service
kubectl delete svc user-management-svc -n user-management-app
```

Restarting the Deployment

To restart all Pods in a Deployment (for example, to pick up a new image or configuration):

```bash
kubectl rollout restart deployment user-management-app -n user-management-app

# Check rollout status
kubectl rollout status deployment user-management-app -n user-management-app
```

Scaling the Deployment

```bash
kubectl scale deployments/user-management-app --replicas=4 -n user-management-app
```

This command scales the Deployment to 4 replicas (Pods). The associated Service will automatically load-balance requests across all replicas.

Types of Kubernetes Services

- ClusterIP (default): Exposes the Service on an internal IP in the cluster, which makes it reachable only from within the cluster.
  Used for: Internal microservice-to-microservice communication.

- NodePort: Exposes the Service on each Node's IP at a static port (by default in the range 30000–32767).
  Used for: Simple external access to a Service, mainly for dev/test environments.

- LoadBalancer: Exposes the Service externally using a cloud provider's load balancer.
  Used for: Production environments in the cloud (AWS ELB, GCP LB, Azure LB).

- ExternalName: Maps a Service to an external DNS name (e.g., a legacy system or DB).
  Used for: Accessing external services as if they were inside the cluster.

Understanding ClusterIP

When you create a Service, Kubernetes automatically assigns it a ClusterIP — a virtual IP address that exists only inside the cluster. You don't define this IP in your Service manifest; Kubernetes picks one for you.

Any Pod inside the same cluster can reach your Service using its DNS name. For example, if you have a Service called my-service in the namespace my-namespace, other Pods can call:

```text
my-service.my-namespace.svc.cluster.local
```

The traffic flow looks like this:

```text
Pod (caller) → DNS resolves to ClusterIP → kube-proxy → target Pod
```

The ClusterIP acts as a stable entry point. Even if the Pods behind the Service are replaced or rescheduled, the ClusterIP stays the same. kube-proxy routes traffic to whichever Pods are currently ready.

Understanding NodePort

A NodePort Service exposes your application on every Node's IP at a fixed port:

```text
<Node IP>:<NodePort>
```

But what exactly is a "Node"? That depends on your cluster.

Nodes in k3d (local development)

In a k3d cluster, a Node is a Docker container acting as a machine. This is the Docker container where Kubernetes (k3s) runs. You can confirm this by running docker ps: you'll see something like k3d-user-management-local-server-0.

```text
Your Laptop (WSL)
      ↓
Docker
      ↓
[ k3d node container ]   ← acts like a VM (this is your "node")
      ↓
Kubernetes (k3s)
      ↓
Pods
      ↓
Containers (your apps)
```

Nodes in AKS (production)

In an AKS cluster, a Node is a real Azure VM. When you apply a Deployment manifest, Kubernetes creates the Pods via a ReplicaSet. The scheduler then places each Pod on a Node that has enough available resources. It generally spreads replicas across Nodes, so if one Node dies, the Pods on the remaining Nodes keep the system running while Kubernetes recreates the lost Pods elsewhere.

How NodePort Access Differs by Environment

In AKS, if you create a NodePort Service like this:

```yaml
type: NodePort
ports:
  - port: 80
    nodePort: 30007
```

you can access the service directly at <Node-VM-IP>:30007 from anywhere that can reach the VM's network.

In k3d, the NodePort exists inside the k3d node container, but that container is not your laptop. The Node has an internal Docker IP (e.g. 172.19.0.2) that your browser can't reach directly. k3d does not publish NodePorts automatically. If you map the port when creating the cluster (for example with -p "30007:30007@server:0" on k3d cluster create), you can access it via localhost:

```text
[ Browser / WSL ]
      ↓
localhost:30007 (you type this)
      ↓
Docker port mapping
      ↓
k3d node container (172.19.0.2)
      ↓
NodePort (30007)
      ↓
kube-proxy
      ↓
Pod
```

Working with NodePorts

Create a Service that exposes the Deployment named user-management-app:

```bash
kubectl expose deployment/user-management-app --type="NodePort" --port 8082 --name=user-management-svc-nodeport -n user-management-app
```

This creates a Service of type NodePort, named user-management-svc-nodeport, that exposes the user-management-app Deployment. Kubernetes assigns a port on each Node (by default in the range 30000–32767) and forwards traffic to port 8082 in the Pods.

```bash
kubectl get svc user-management-svc-nodeport -n user-management-app
```

Example output:

```text
NAME                           TYPE       CLUSTER-IP     EXTERNAL-IP   PORT(S)          AGE
user-management-svc-nodeport   NodePort   10.43.56.235   <none>        8082:31776/TCP   6m
```

In PORT(S), 8082 is the Service port and 31776 is the NodePort assigned by Kubernetes.

To access your application from outside the cluster:

- Use the Node IP of your Kubernetes node plus the NodePort.
- Check the node IP using kubectl get nodes -o wide:

  ```bash
  kubectl get nodes -o wide
  # Example output:
  # NAME                                 STATUS   ROLES                  AGE   VERSION        INTERNAL-IP   EXTERNAL-IP
  # k3d-user-management-local-server-0   Ready    control-plane,master   16d   v1.31.5+k3s1   172.19.0.4    <none>
  ```

- In WSL2, the node's INTERNAL-IP (172.19.0.4) is inside the Docker network and usually not reachable from Windows.
- Instead, use the WSL2 host IP (from hostname -I), e.g. 172.26.41.222:

  ```bash
  hostname -I
  # Example output:
  # 172.26.41.222 172.119.0.1
  ```

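Example URL from Windows or outside the WSL2 VM. This assumes the NodePort is actually reachable on the WSL2 host, for example through a k3d port mapping:

http://172.26.41.222:31776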
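If you script these steps, the only moving parts are the NodePort from the PORT(S) column and a reachable host IP. The Python sketch below assumes a single-port NodePort Service and the default port range. The host IP is the example WSL2 address from this post and will differ on your machine.

```python
from typing import Tuple

# Default NodePort range; the API server flag --service-node-port-range can change it.
NODE_PORT_RANGE = range(30000, 32768)


def parse_node_port(ports_column: str) -> Tuple[int, int, str]:
    """Split a PORT(S) value like '8082:31776/TCP' into (port, nodePort, protocol)."""
    port_part, protocol = ports_column.split("/", 1)
    service_port, node_port = (int(p) for p in port_part.split(":", 1))
    if node_port not in NODE_PORT_RANGE:
        raise ValueError(f"{node_port} is outside the default NodePort range")
    return service_port, node_port, protocol


def node_url(host_ip: str, ports_column: str) -> str:
    """Combine a reachable host IP with the NodePort taken from PORT(S)."""
    _, node_port, _ = parse_node_port(ports_column)
    return f"http://{host_ip}:{node_port}"


print(node_url("172.26.41.222", "8082:31776/TCP"))  # http://172.26.41.222:31776
```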

Port Forwarding — The Easy Way to Test Any Service

Whether you have a ClusterIP or NodePort Service, you can always use kubectl port-forward to access it from your local machine without worrying about Node IPs or Docker port mappings:

```bash
kubectl port-forward svc/user-management-svc 8082:8082 -n user-management-app
```

Then open http://localhost:8082. This creates a temporary tunnel from your machine directly into the cluster, bypassing all the NodePort networking complexity.

- svc/ port-forward (service-level) uses the Service selector to pick one matching Pod when the tunnel starts. All traffic then goes to that Pod; it does not load-balance across replicas.

- deploy/ port-forward (deployment-level) likewise forwards to a single Pod chosen from the Deployment. To debug a specific Pod instance, forward to it by name with pod/<pod-name>.

Working with Ingress

Ingress allows you to expose multiple services under the same host or domain using HTTP(S) routes. This is useful when you want to route traffic to multiple applications inside the same cluster without exposing each Service individually.

In this example, we have installed Curity in the curity namespace. The runtime and admin pods are running, and we want to make these services accessible from outside the cluster (e.g., from other clusters or your host machine).

Example Ingress YAML

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: curity-ingress
  namespace: curity
spec:
  ingressClassName: traefik   # Which ingress controller should handle this Ingress. This replaces the
                              # deprecated kubernetes.io/ingress.class annotation; don't set both.
  rules:
    - host: curity.local      # The hostname to access services
      http:
        paths:
          - path: /runtime    # Route traffic with /runtime prefix
            pathType: Prefix
            backend:
              service:
                name: idsvr-tutorial-runtime-svc   # Service to route traffic to
                port:
                  number: 8443
          - path: /admin      # Route traffic with /admin prefix
            pathType: Prefix
            backend:
              service:
                name: idsvr-tutorial-admin-svc     # Service to route traffic to
                port:
                  number: 6749
```
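With pathType: Prefix, matching is done element by element on the /-separated path, not character by character. When several paths match, the longest one wins. The Python sketch below is a simplified model of that rule as the Ingress spec describes it. It is not Traefik's implementation, and it ignores host matching, Exact paths, and ImplementationSpecific.

```python
from typing import List, Optional, Sequence, Tuple


def _elements(path: str) -> List[str]:
    # Split on "/" and drop empty parts, so "/runtime/" and "/runtime" compare equal.
    return [part for part in path.split("/") if part]


def prefix_matches(rule_path: str, request_path: str) -> bool:
    """pathType: Prefix matches whole path elements, so /runtime does not match /runtimex."""
    rule, request = _elements(rule_path), _elements(request_path)
    return request[: len(rule)] == rule


def route(rules: Sequence[Tuple[str, str]], request_path: str) -> Optional[str]:
    """Return the backend of the longest matching Prefix rule, or None if nothing matches."""
    matches = [(path, backend) for path, backend in rules if prefix_matches(path, request_path)]
    if not matches:
        return None
    return max(matches, key=lambda m: len(_elements(m[0])))[1]


# Paths and Services from the curity-ingress example above
rules = [
    ("/runtime", "idsvr-tutorial-runtime-svc:8443"),
    ("/admin", "idsvr-tutorial-admin-svc:6749"),
]
print(route(rules, "/runtime/oauth/v2"))  # idsvr-tutorial-runtime-svc:8443
print(route(rules, "/runtimex"))          # None
```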

To apply the Ingress, ensure you use the correct namespace:

```bash
kubectl apply -f curity-ingress.yaml -n curity
```

Accessing your services via Ingress

- In WSL2, the Linux VM has its own network namespace. Your cluster's Node IP (e.g., 172.19.0.4) is usually internal to Docker/k3d and not directly reachable from Windows.
- The WSL2 host IP (from hostname -I, e.g., 172.26.41.222) is reachable from your Windows host. Adding this IP with the hostname curity.local to the hosts file allows your browser to resolve the domain correctly:
  - In Windows, edit C:\Windows\System32\drivers\etc\hosts (admin rights) and add: 172.26.41.222 curity.local
  - In WSL/Linux apps (optional), you can add the same line to /etc/hosts if testing from inside WSL.
- Once the host resolves, you can access the services in your browser. The Ingress controller (Traefik) will route traffic to the correct service inside the cluster:
  - Runtime service: https://curity.local/runtime
  - Admin service: https://curity.local/admin
- Tip: If you encounter HTTPS certificate warnings, accept the self-signed certificate for testing purposes.

Accessing from inside the same cluster

From a Pod inside the same cluster, you don't need to go through the Ingress hostname unless you want to test the exact external route. The most common and efficient way to access services inside the cluster is via the Service DNS.

What is Service DNS?

Kubernetes automatically gives every Service a DNS name in the form <service-name>.<namespace>.svc.cluster.local, where cluster.local is the default cluster domain.

To check the services in the curity namespace:

```bash
kubectl get svc -n curity
```

Example output:

```text
NAME                         TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)                                         AGE
idsvr-tutorial-admin-svc     ClusterIP   10.43.209.141   <none>        6789/TCP,6790/TCP,4465/TCP,4466/TCP,6749/TCP   2d22h
idsvr-tutorial-runtime-svc   ClusterIP   10.43.66.149    <none>        8443/TCP,4465/TCP,4466/TCP                      2d22h
```

- Admin Service: http://idsvr-tutorial-admin-svc.curity.svc.cluster.local:6789
- Runtime Service: http://idsvr-tutorial-runtime-svc.curity.svc.cluster.local:8443
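The URLs above follow the <service>.<namespace>.svc.<cluster-domain> pattern. Below is a small Python helper that builds them. It assumes the default cluster.local domain, which some clusters change.

```python
def service_fqdn(service: str, namespace: str, cluster_domain: str = "cluster.local") -> str:
    """Return <service>.<namespace>.svc.<cluster_domain>."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


def service_url(service: str, namespace: str, port: int, scheme: str = "http") -> str:
    """Build an in-cluster URL for a Service port."""
    return f"{scheme}://{service_fqdn(service, namespace)}:{port}"


print(service_url("idsvr-tutorial-admin-svc", "curity", 6789))
# http://idsvr-tutorial-admin-svc.curity.svc.cluster.local:6789
print(service_url("idsvr-tutorial-runtime-svc", "curity", 8443))
# http://idsvr-tutorial-runtime-svc.curity.svc.cluster.local:8443
```

From a Pod in the same namespace, the short name (for example idsvr-tutorial-admin-svc) also resolves. This works because the Pod's DNS search list includes <namespace>.svc.cluster.local.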

Checking the Ingress Controller

You need to know which Ingress controller is installed (Traefik, NGINX, etc.) so your ingressClassName matches it.

```bash
kubectl get ingressclass
```

Example output:

```text
NAME      CONTROLLER                      PARAMETERS   AGE
traefik   traefik.io/ingress-controller   <none>       16d
```

You can also describe the IngressClass to see which controller handles a given class:

```bash
kubectl describe ingressclass traefik
```

Key points

- Both the Ingress and the target Services must be in the same namespace (curity in this case); an Ingress can only route to Services in its own namespace.

- Ingress supports TLS termination. Many controllers also add features such as authentication and path rewrites through annotations or their own resources.

- Using Ingress, you can make multiple services in one namespace accessible via a single host or domain, which is cleaner than exposing each Service with a NodePort.

Example use case:

- You have installed Curity runtime and admin pods in the curity namespace.
- Other clusters or your Windows host need to access these services without exposing NodePorts.
- You create an Ingress resource that maps the /runtime and /admin paths to the correct Services. Now clients can access:
  - Runtime: https://curity.local/runtime
  - Admin: https://curity.local/admin

Setting Up a Local k3d Cluster and NGINX Ingress

In the previous section, you learned how to expose services using the Traefik Ingress Controller. Here, let's explore the same concept using the NGINX Ingress Controller and a new demo application.

Create a new k3d cluster

```bash
k3d cluster create demo-test \
  --api-port 6551 \
  -p "80:80@loadbalancer" \
  -p "443:443@loadbalancer" \
  --k3s-arg "--disable=traefik@server:0"
```

Notes:

- --api-port 6551 exposes the Kubernetes API server on port 6551 of your local machine. This allows kubectl and other Kubernetes clients on your host to communicate with the cluster.
- -p "80:80@loadbalancer" and -p "443:443@loadbalancer" map ports 80 and 443 of your machine to k3d's built-in load balancer, so HTTP(S) requests to localhost reach the cluster.
- --k3s-arg "--disable=traefik@server:0" disables the default Traefik Ingress Controller that comes preinstalled with k3s. This ensures there's no conflict when you later install the NGINX Ingress Controller manually.

Check that the cluster is running:

```bash
kubectl get nodes
```

Install the NGINX Ingress Controller

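```bash
# Install Helm. The stock Ubuntu/Debian repositories may not provide a helm package;
# this line assumes Helm's apt repository is already configured. Alternatives are the
# official install script or `sudo snap install helm --classic`.
sudo apt install -y helm

helm repo add ingress-nginx https://kubernetes.github.io/ingress-nginx
helm repo update

helm install nginx-ingress ingress-nginx/ingress-nginx \
  --namespace demo-nginx-ingress \
  --create-namespace \
  --set controller.publishService.enabled=true
```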

Explanation:

- helm repo add ingress-nginx https://kubernetes.github.io/ingress-nginx
  - ingress-nginx is the Helm repository name you assign locally.
  - https://kubernetes.github.io/ingress-nginx is the official Helm chart repository URL for the NGINX Ingress Controller maintained by the Kubernetes community.
  - This command tells Helm where to find and download the NGINX Ingress Controller chart.
- helm install nginx-ingress ingress-nginx/ingress-nginx
  - nginx-ingress is the release name: a name you choose to identify this installation of the chart within your cluster.
  - ingress-nginx/ingress-nginx refers to the chart path:
    - The first ingress-nginx refers to the repository you added earlier.
    - The second ingress-nginx is the actual chart name inside that repository.
  - In short: "Install the ingress-nginx chart from the ingress-nginx repo, and call this release nginx-ingress."
- --namespace demo-nginx-ingress: deploys into a dedicated namespace for the ingress controller. --create-namespace creates that namespace if it doesn't exist yet.
- --set controller.publishService.enabled=true: makes the controller write the address of its own Service into the status of the Ingress resources it serves, so clients and tools can see where to send traffic.

Deploy a sample application to test routing

Save the following as demo-app-deployment.yaml:

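```yaml
apiVersion: v1
kind: Namespace      # Create the namespace first so the objects below can be placed in it
metadata:
  name: demo-app
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: demo-app
  namespace: demo-app
spec:
  replicas: 1
  selector:
    matchLabels:
      app: demo-app
  template:
    metadata:
      labels:
        app: demo-app
    spec:
      containers:
        - name: demo-app
          image: hashicorp/http-echo
          args:
            - "-text=Hello from NGINX Ingress!"
          ports:
            - containerPort: 5678   # http-echo listens on 5678 by default
---
apiVersion: v1
kind: Service
metadata:
  name: demo-app
  namespace: demo-app
spec:
  selector:
    app: demo-app
  ports:
    - port: 80           # Service port that the Ingress points to
      targetPort: 5678   # Container port of http-echo
```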

Apply the manifest:

```bash
kubectl apply -f demo-app-deployment.yaml
```

Create an Ingress rule for the sample app

Save the following as demo-ingress.yaml:

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: demo-ingress
  namespace: demo-app
spec:
  ingressClassName: nginx
  rules:
    - host: demo.local
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: demo-app
                port:
                  number: 80
```

Apply the ingress:

```bash
kubectl apply -f demo-ingress.yaml
```

Edit your hosts file

To make demo.local resolvable, add the following line to your hosts file:

```text
127.0.0.1 demo.local
```

- Inside WSL/Linux: edit /etc/hosts (for example, sudo nano /etc/hosts).
- In Windows: edit C:\Windows\System32\drivers\etc\hosts (admin rights).

Test routing

- From WSL:

  ```bash
  curl http://demo.local
  # Output: Hello from NGINX Ingress!
  ```

- From a Windows browser: visit http://demo.local after adding the hosts entry.

Understanding the hosts file

The hosts file is a local DNS override file that maps domain names to IP addresses before your system queries any DNS server.

What happens when you add it:

- You type http://demo.local in your browser.
- Windows checks the hosts file first.
- It finds demo.local → 127.0.0.1.
- The HTTP request is sent to 127.0.0.1 (your local machine). It carries the Host header demo.local, which is what the Ingress rule matches on.
- Flow: Browser → 127.0.0.1:80 → k3d LoadBalancer → NGINX Ingress → demo-app
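The lookup in these steps can be modelled in a few lines of Python. This is a simplified sketch of hosts-file parsing in which the first matching line wins, as common resolvers do. Real resolvers also add caching, IPv4/IPv6 preferences, and platform-specific rules. The sample content is hypothetical.

```python
from typing import Optional


def resolve_from_hosts(hosts_text: str, hostname: str) -> Optional[str]:
    """Return the IP for hostname from hosts-file text, or None if it isn't listed.

    Each non-comment line is: <IP> <name> [aliases...]. Names compare case-insensitively.
    """
    for line in hosts_text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if not line:
            continue
        ip, *names = line.split()
        if hostname.lower() in (name.lower() for name in names):
            return ip
    return None


sample = """
# Added for the k3d demo
127.0.0.1 localhost
127.0.0.1 demo.local
172.26.41.222 curity.local
"""
print(resolve_from_hosts(sample, "demo.local"))   # 127.0.0.1
print(resolve_from_hosts(sample, "example.com"))  # None
```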

Service → External IP (Service + Endpoints)

Sometimes Pods inside your cluster need to communicate with a service running outside the cluster (e.g., a legacy backend, a database on your host machine, or another service that isn't containerized).

Kubernetes doesn't automatically route localhost from inside Pods to your Windows host, especially when running under WSL2 or k3d. Instead, you can use a Service + Endpoints pair to bridge traffic.

In this scenario, our curity-runtime Pod listens on port 8439. It needs to call a backend service (e.g., internal-scim) running on the Windows host at port 8088. We expose that Windows service inside Kubernetes with a Service + Endpoints pair, so Pods can call it like any other internal Service.

```yaml
# external-backend.yaml
apiVersion: v1
kind: Service
metadata:
  name: external-backend-svc
  namespace: curity
spec:
  type: ClusterIP          # Default: makes the Service reachable only inside the cluster
  # No selector: Kubernetes won't manage Endpoints for this Service; we provide them below
  ports:
    - name: backend-api
      port: 8088           # Port the Service exposes inside the cluster (Pods connect to this port)
      targetPort: 8088     # Port on the backing endpoints; keep it equal to the Endpoints port below
    - name: backend-db
      port: 27017
      targetPort: 27017
---
apiVersion: v1
kind: Endpoints
metadata:
  name: external-backend-svc   # Must match the Service name exactly
  namespace: curity
subsets:
  - addresses:
      - ip: 172.26.41.222      # External service IP (your Windows host IP from `hostname -I`)
    ports:
      - name: backend-api      # Must match Service.spec.ports[].name above
        port: 8088             # Port on the external host that provides the API
      - name: backend-db
        port: 27017
```

Namespace matters:

- Both the Service and Endpoints must be in the same namespace (e.g., curity).
- When applying YAML files, always use the correct namespace:

  ```bash
  kubectl apply -f external-backend.yaml -n curity
  ```

- If no namespace is given (neither in the manifest nor with -n), Kubernetes uses the namespace of your current context, usually default. Your Pods in curity then won't find the service by its short name.

Verify configuration:

```bash
kubectl get svc external-backend-svc -n curity -o wide
kubectl get endpoints external-backend-svc -n curity -o yaml
```

Testing the setup:

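```bash
# Exec into the user-management Pod (it runs in the user-management-app namespace)
kubectl exec -it deploy/user-management-app -n user-management-app -- sh

# Inside the Pod, test the external backend call. The Service lives in curity,
# so use its fully qualified name when calling from another namespace:
curl http://external-backend-svc.curity.svc.cluster.local:8088
```

If the setup is correct, this curl will hit the Windows service running at 172.26.41.222:8088. From the Pod's perspective, though, it looks like a normal Kubernetes Service.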

How this works:

- The Service defines logical ports inside the cluster (8088, 27017). Pods in your cluster can call:
  - Within the same namespace: http://external-backend-svc:8088
  - From another namespace: http://external-backend-svc.curity.svc.cluster.local:8088
- The Endpoints object maps those logical ports to an external IP address (in this case, 172.26.41.222, your WSL2 host IP). This tells Kubernetes where to actually send traffic.
- Kubernetes proxies requests:
  Pod (outbound request) → Service (ClusterIP:8088) → Endpoints → External host IP (172.26.41.222:8088).

Why is this needed?

- Inside WSL2, the Linux VM has its own network namespace. Your cluster's Node IP (e.g., 172.19.0.4) is usually internal to Docker/k3d and not directly reachable from Windows.
- The WSL2 host IP (from hostname -I, e.g., 172.26.41.222) is reachable from Windows and should be used in the Endpoints object.
- Inside WSL2/k3d, Pods cannot reach Windows directly with 127.0.0.1 or localhost, because localhost inside a container refers to the container itself.
- By creating a Service + Endpoints pair, you give Pods a stable DNS name inside the cluster while actually routing traffic outside to Windows or another machine.
- When Pods talk to the internet (like calling https://api.github.com), they don't need this, because cluster networking and NAT already handle external routing. This trick is only for routing to services bound to your host machine or private network IPs.

Example use case:

- You're running a MongoDB instance on Windows that listens on an address reachable from WSL2. An instance bound only to localhost:27017 won't accept connections coming from the cluster.
- Your Pod in WSL2 needs to connect to that DB.
- You expose it in Kubernetes as external-backend-svc and the Pod just connects to mongodb://external-backend-svc:27017.

Tip: On Windows + WSL2, get the reachable host IP with:

```bash
hostname -I   # e.g., 172.26.41.222
```

Add that IP in your Endpoints object. If it changes (for example after a reboot), you'll need to update the Endpoints.

For a more permanent solution, you can run a reverse proxy inside the cluster or use host.docker.internal. However, host.docker.internal is only available by default in Docker Desktop (Windows/Mac). It isn't defined out of the box with Docker Engine on Linux, which is what a typical WSL2 + k3d setup uses.
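Because the IP can change after a reboot, you may prefer to regenerate the Endpoints object rather than edit it by hand. The Python sketch below rebuilds the external-backend-svc Endpoints from this post for a given address. It assumes the first address printed by hostname -I is the one you want, which is not guaranteed on every machine.

```python
import json
from typing import Any, Dict


def first_address(hostname_i_output: str) -> str:
    """Take the first address printed by `hostname -I` (assumed to be the reachable one)."""
    addresses = hostname_i_output.split()
    if not addresses:
        raise ValueError("hostname -I printed no addresses")
    return addresses[0]


def endpoints_manifest(ip: str) -> Dict[str, Any]:
    """Rebuild the external-backend-svc Endpoints object from the example for a given IP."""
    return {
        "apiVersion": "v1",
        "kind": "Endpoints",
        "metadata": {"name": "external-backend-svc", "namespace": "curity"},
        "subsets": [
            {
                "addresses": [{"ip": ip}],
                "ports": [
                    {"name": "backend-api", "port": 8088},
                    {"name": "backend-db", "port": 27017},
                ],
            }
        ],
    }


# The sample string mimics the `hostname -I` output shown earlier in this post.
manifest = endpoints_manifest(first_address("172.26.41.222 172.119.0.1\n"))
print(json.dumps(manifest, indent=2))
```

In practice you would capture the real output, e.g. with subprocess.run(["hostname", "-I"], capture_output=True, text=True).stdout. You could then write the JSON to a file and apply it with kubectl apply -f, which accepts JSON as well as YAML.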

Key Difference - Deployment-backed Service vs External Service

Normally, a Kubernetes Service selects Pods using spec.selector, and targetPort maps to the container port inside those Pods.

In the case of an external Service (using a Service + Endpoints pair), there are no Pods. Instead, you manually create an Endpoints object with an IP address and ports, and the Service simply forwards requests to whatever you define there. Here, targetPort must match the port numbers you defined in the Endpoints.

So the traffic flow is:

- Deployment-backed Service: Service.port → Service.targetPort → Pod.containerPort
- External Service: Service.port → Service.targetPort → Endpoints.port
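The external flow can be traced with a toy Python model. It assumes Service and Endpoints ports are paired by name, as the comments in external-backend.yaml require. It ignores protocols and EndpointSlices, and it is not how kube-proxy is actually implemented.

```python
from typing import Any, Dict, List, Tuple


def resolve_external(service_ports: List[Dict[str, Any]], endpoints: Dict[str, Any],
                     service_port: int) -> List[Tuple[str, int]]:
    """Return the (ip, port) pairs that traffic sent to service_port ends up at.

    Find the Service port by number, then pair it with the Endpoints port of the same name.
    """
    svc = next((p for p in service_ports if p["port"] == service_port), None)
    if svc is None:
        raise LookupError(f"Service has no port {service_port}")
    targets: List[Tuple[str, int]] = []
    for subset in endpoints["subsets"]:
        for ep_port in subset["ports"]:
            if ep_port["name"] == svc["name"]:
                targets.extend((addr["ip"], ep_port["port"]) for addr in subset["addresses"])
    return targets


# Values copied from external-backend.yaml above
service_ports = [
    {"name": "backend-api", "port": 8088, "targetPort": 8088},
    {"name": "backend-db", "port": 27017, "targetPort": 27017},
]
endpoints = {
    "subsets": [
        {
            "addresses": [{"ip": "172.26.41.222"}],
            "ports": [{"name": "backend-api", "port": 8088}, {"name": "backend-db", "port": 27017}],
        }
    ]
}
print(resolve_external(service_ports, endpoints, 8088))  # [('172.26.41.222', 8088)]
```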

ConfigMaps — store non-sensitive configuration

ConfigMaps keep configuration separate from images. Two common usage patterns are: mount as files, or inject as environment variables.

Create a ConfigMap

```yaml
# app-configmap.yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: app-config
  namespace: user-management-app
data:
  APP_MODE: "production"
  APP_VERSION: "1.0.0"
  LOG_LEVEL: "debug"
```

Scenario A — ConfigMap as environment variables

```yaml
# deployment-configmap-env.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: configmap-env-deployment
  namespace: user-management-app
spec:
  replicas: 1
  selector:
    matchLabels:
      app: configmap-env
  template:
    metadata:
      labels:
        app: configmap-env
    spec:
      containers:
        - name: demo-container
          image: busybox
          command: ["sh", "-c", "env; sleep 3600"]
          envFrom:
            - configMapRef:
                name: app-config   # Every key in app-config becomes an environment variable
```

Apply & verify:

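```bash
kubectl apply -f app-configmap.yaml
kubectl apply -f deployment-configmap-env.yaml
kubectl get pods -n user-management-app
kubectl exec -it <pod-name> -n user-management-app -- env | grep APP_MODE
```

Note: environment variables from a ConfigMap are set only when the container starts. If you change the ConfigMap, restart the Pods to pick up the new values (kubectl rollout restart deployment/<name> -n <ns>).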
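On the application side, the keys from app-config simply show up as environment variables. Below is a minimal Python sketch of reading them once at startup, which matches the restart note above. The AppConfig class and the fallback defaults are hypothetical choices for running the app outside the cluster.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class AppConfig:
    mode: str
    version: str
    log_level: str


def load_config_from_env(env: Mapping[str, str] = os.environ) -> AppConfig:
    """Build AppConfig from the variables injected by envFrom/configMapRef.

    The fallback values are hypothetical defaults for local runs.
    """
    return AppConfig(
        mode=env.get("APP_MODE", "development"),
        version=env.get("APP_VERSION", "0.0.0"),
        log_level=env.get("LOG_LEVEL", "info"),
    )


if __name__ == "__main__":
    # Inside the configmap-env Pod this would show mode='production', log_level='debug'
    print(load_config_from_env())
```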

Scenario B — ConfigMap mounted as files

```yaml
# deployment-configmap-file.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: configmap-file-deployment
  namespace: user-management-app
spec:
  replicas: 1
  selector:
    matchLabels:
      app: configmap-file
  template:
    metadata:
      labels:
        app: configmap-file
    spec:
      containers:
        - name: demo-container
          image: busybox
          command: ["sh", "-c", "cat /config/APP_MODE; sleep 3600"]
          volumeMounts:
            - name: config-volume
              mountPath: /config   # Each ConfigMap key appears as a file here, e.g. /config/APP_MODE
      volumes:
        - name: config-volume
          configMap:
            name: app-config
```

Apply & verify:

```bash
kubectl apply -f app-configmap.yaml
kubectl apply -f deployment-configmap-file.yaml
kubectl exec -it <pod-name> -n user-management-app -- cat /config/APP_MODE
```

Secrets — store sensitive data (with caution)

Secrets store sensitive strings (passwords, keys). They are base64-encoded when stored in YAML but are not encrypted in etcd unless you enable encryption at rest.
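To see why base64 is an encoding and not encryption, here is the round trip in Python's standard library. It produces the same values as the echo -n ... | base64 commands used here. Decoding needs no key, so anyone who can read the Secret can recover the value.

```python
import base64


def encode_secret_value(value: str) -> str:
    """Encode a value for a Secret's data: field (same as `echo -n value | base64`)."""
    return base64.b64encode(value.encode("utf-8")).decode("ascii")


def decode_secret_value(encoded: str) -> str:
    """Reverse the encoding; no key or password is involved."""
    return base64.b64decode(encoded).decode("utf-8")


print(encode_secret_value("user"))          # dXNlcg==
print(encode_secret_value("password"))      # cGFzc3dvcmQ=
print(decode_secret_value("cGFzc3dvcmQ="))  # password
```

The -n flag on echo matters. Without it, the trailing newline would be encoded as well (dXNlcgo= instead of dXNlcg==), and the application would receive "user\n".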

Create a Secret

```bash
echo -n 'user' | base64       # output: dXNlcg==
echo -n 'password' | base64   # output: cGFzc3dvcmQ=
```

```yaml
# app-secret.yaml
apiVersion: v1
kind: Secret
metadata:
  name: app-secret
  namespace: user-management-app
type: Opaque
data:
  DB_USER: dXNlcg==
  DB_PASSWORD: cGFzc3dvcmQ=
```

Scenario A — Secret as environment variables

```yaml
# deployment-secret-env.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: secret-env-deployment
  namespace: user-management-app
spec:
  replicas: 1
  selector:
    matchLabels:
      app: secret-env
  template:
    metadata:
      labels:
        app: secret-env
    spec:
      containers:
        - name: demo-container
          image: busybox
          # Demo only: `env` writes the decoded secret values to the Pod logs
          command: ["sh", "-c", "env; sleep 3600"]
          envFrom:
            - secretRef:
                name: app-secret   # Every key in app-secret becomes an environment variable (decoded)
```

Apply & verify:

```bash
kubectl apply -f app-secret.yaml
kubectl apply -f deployment-secret-env.yaml
kubectl exec -it <pod-name> -n user-management-app -- printenv | grep DB_
```

Scenario B — Secret mounted as files

```yaml
# deployment-secret-file.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: secret-file-deployment
  namespace: user-management-app
spec:
  replicas: 1
  selector:
    matchLabels:
      app: secret-file
  template:
    metadata:
      labels:
        app: secret-file
    spec:
      containers:
        - name: demo-container
          image: busybox
          # Demo only: cat writes the secret values to the Pod logs
          command: ["sh", "-c", "cat /secrets/DB_USER; cat /secrets/DB_PASSWORD; sleep 3600"]
          volumeMounts:
            - name: secret-volume
              mountPath: /secrets   # Each Secret key becomes a file here, holding the decoded value
      volumes:
        - name: secret-volume
          secret:
            secretName: app-secret
```

Apply & verify:

```bash
kubectl apply -f app-secret.yaml
kubectl apply -f deployment-secret-file.yaml
kubectl exec -it <pod-name> -n user-management-app -- ls /secrets
kubectl exec -it <pod-name> -n user-management-app -- cat /secrets/DB_USER
```

Quick verification checklist (copy & paste)

```bash
# Create namespaces
kubectl create namespace curity
kubectl create namespace user-management-app

# Apply ConfigMap & Secret (example)
kubectl apply -f app-configmap.yaml
kubectl apply -f app-secret.yaml

# Deploy example workloads
kubectl apply -f deployment-configmap-env.yaml
kubectl apply -f deployment-configmap-file.yaml
kubectl apply -f deployment-secret-env.yaml
kubectl apply -f deployment-secret-file.yaml

# User management app
kubectl apply -f user-management-deployment.yaml -n user-management-app
kubectl apply -f user-management-service.yaml -n user-management-app

# Check pods
kubectl get pods -n curity
kubectl get pods -n user-management-app

# Verify ConfigMap via env (the example workloads run in user-management-app)
kubectl exec -it <pod-name> -n user-management-app -- env | grep APP_MODE

# Verify ConfigMap via file
kubectl exec -it <pod-name> -n user-management-app -- cat /config/APP_MODE

# Verify Secret via env
kubectl exec -it <pod-name> -n user-management-app -- printenv | grep DB_PASSWORD

# Verify Secret via file
kubectl exec -it <pod-name> -n user-management-app -- cat /secrets/DB_PASSWORD

# Verify service -> external endpoints
kubectl get svc external-backend-svc -n curity -o yaml
kubectl get endpoints external-backend-svc -n curity -o yaml
```

Final notes & recommendations

- Always check your current context with kubectl config current-context before applying manifests.

- For production, enable encryption at rest for Secrets in etcd (optionally backed by a KMS such as AWS KMS). Also consider an external secret store such as HashiCorp Vault.

- Prefer mounting config files if the app can re-read them; use env vars when configuration is simple or fixed at Pod start.

- For local development, port-forwarding is convenient. For staging/production, prefer Ingress controllers or LoadBalancer Services, and keep NodePort for simple or test setups.
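The recommendation to mount config files when the app can re-read them might look like the Python sketch below. It reads a ConfigMap or Secret volume (such as /config or /secrets from the examples) into a dict, with one file per key. It skips the hidden ..data and ..<timestamp> entries that the kubelet keeps in the mount. The function name is hypothetical.

```python
from pathlib import Path
from typing import Dict


def read_mounted_config(mount_dir: str) -> Dict[str, str]:
    """Read a ConfigMap or Secret volume into a {key: value} dict.

    Every key appears as a file named after the key. Internal entries such as
    ..data and ..<timestamp> also live in the mount, so names starting with ".."
    are skipped.
    """
    config: Dict[str, str] = {}
    for entry in sorted(Path(mount_dir).iterdir()):
        if entry.name.startswith("..") or not entry.is_file():
            continue
        config[entry.name] = entry.read_text(encoding="utf-8")
    return config
```

Calling the function again, for example on a timer, picks up changes. The kubelet refreshes ConfigMap and Secret volumes after a short delay, except for files mounted with subPath, while environment variables never change in a running container. For Secrets, the files already contain the decoded values, not base64.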